Copyright © 2010 David Schmidt

Chapter 0:

An Introduction to Language Paradigms

- 0.1 Software-architecture paradigms

- 0.2 Programming paradigms

- 0.3 Programming language anatomy

- 0.4 Designing your own language to fit the job

A paradigm is a pattern or stylistic approach to doing

or stating something. For example, when you prepare your

resumé, you use a standard pattern, a paradigm, for

formatting it. Another paradigm is the layout of the engine

in a car --- although there are many different models of car,

virtually all of them use the same design for their

internal-combustion engine, frame, wheels, etc.

Paradigms are useful to architects, who

design and build skyscrapers with one paradigm and design wood-frame

family residences with another. Writers use paradigms for

short stories, novels, and newspaper stories.

Paradigms are used by scientists and engineers, too.

Paradigms are useful in computer hardware design --- there are standard

chip layouts, network layouts, etc.

And there are paradigms for computer software:

0.1 Software-architecture paradigms

Before we consider programming languages and their paradigms, we review

the paradigms

(architectures) for building software systems.

The material that follows is taken from a longer

presentation,

Software architecture: an informal introduction,

which you are welcome to read.

In CIS501, you drew blueprints --- class diagrams and object diagrams ---

that showed the architecture of systems you built: card games,

graphical toys, database systems.

There are several standard architectures for software systems:

-

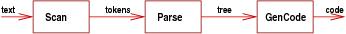

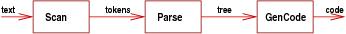

Sequential architecture: a program's components

are connected in a straight

line and communicate by sending entire files or packets of information from

one component to the next:

This layout is used for "batch" programs like payroll or a C compiler,

which do work in stages, one at a time.

It is also useful for real-time monitoring programs, like a heart pacemaker,

which accept a stream of input data and emit a stream of outputs.

-

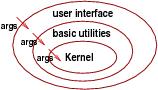

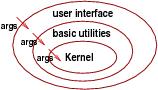

Hierarchical (tiered) architecture: components are organized in

levels/layers, and calls are made

strictly downwards from one level to the lower, adjacent level.

The layout is drawn like an onion, or a tree, or a mobile:

This layout is standard for systems software (e.g., operating systems,

device drivers, protocol code)

where the lowest level (the "kernel") is at the hardware,

and each level talks only to the level just below.

Testing is done

from the lowest level upwards. This architecture makes it

easy to port software from one hardware platform to another --- just rewrite

the lowest, kernel layer.

The architecture also appears in single-user systems where there is a clear division ("tiers") between data structures, controllers, and user interfaces.

-

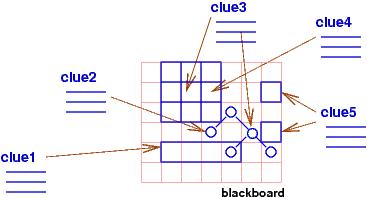

Repository architecture: one component acts a central store ("database") for information,

and the other components communicate by reading from and writing to

the central store.

This layout is useful for database and blackboard systems, where many

agents/users contribute and share knowledge.

It is also common for IDEs (like Eclipse and Visual Studio), where

the program being developed/edited is kept in the "database", and the syntax

checking, compiling, and linking processes "plug into" the database.

-

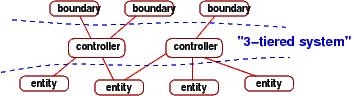

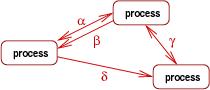

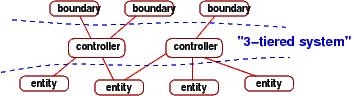

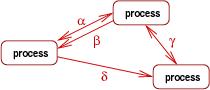

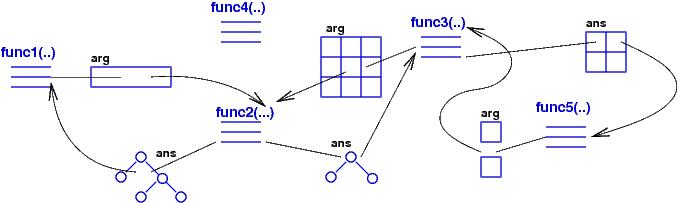

Independent-compoent systems: no component is ``in charge'' of the computation.

Indeed, the

components might be

distributed across distinct hardwares. Components execute ("react") when

they receive

input, which might be events, signals, messages, or remote calls:

This format is used for

reactive systems (software with GUIs), distributed systems (multi-player games)

and networks (client-server and peer-to-peer).

-

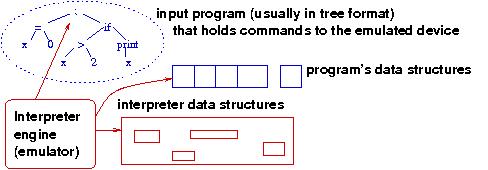

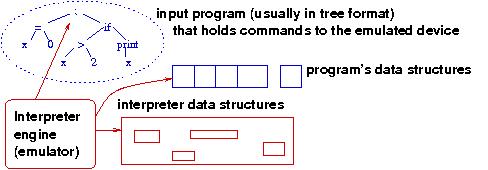

Virtual machine (interpreter) architecture:

In the 1950s and 1960s, each new machine design was implemented with chips and wires --- this is too expensive to do today.

Like ``virtual reality'', a virtual machine

simulates a new hardware device/machine in software by reading and emulating

the commands to the device:

Many modern processor chips contain a firmware virtual machine (microcode) that simulates an elaborate machine language. When you write machine code for such a chip, your code is in effect interpreted by the chip's firmware virtual machine.

This same idea is used

for language processing, where custom languages are implemented as

interpreters written in existing languages. (Example: an implementation of

Ruby as a C-coded interpreter or an implementation of a gaming language on top of Ruby.)

Any application software that requires an input language of words (and not just

point-and-click) uses a virtual machine to read the language.

You will learn to build virtual machines in this course.
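
As a minimal sketch of the virtual-machine idea, the Python function below interprets programs for a made-up stack machine. The instruction names (push, add, mul) and the function name run are hypothetical, chosen only for illustration; they are not taken from any real machine or from the later chapters of this course.

```python
def run(program):
    """Interpret a list of instructions for a hypothetical stack machine.

    Each instruction is a tuple: ("push", n), ("add",), or ("mul",).
    Returns the value left on top of the stack when the program ends.
    """
    stack = []
    for instruction in program:
        opcode = instruction[0]
        if opcode == "push":
            stack.append(instruction[1])
        elif opcode == "add":
            right = stack.pop()
            left = stack.pop()
            stack.append(left + right)
        elif opcode == "mul":
            right = stack.pop()
            left = stack.pop()
            stack.append(left * right)
        else:
            raise ValueError(f"unknown instruction: {opcode}")
    return stack.pop()


# (2 + 1) * 4, written in the made-up machine language
program = [("push", 2), ("push", 1), ("add",), ("push", 4), ("mul",)]
print(run(program))  # prints 12
```

The loop plays the role of the machine: it reads one command at a time and emulates its effect on the machine's state, which here is just a stack.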

0.2 Programming paradigms

Hardware has paradigms; software has paradigms. Software is written

in programming languages, and programming languages use paradigms.

This course covers three standard paradigms:

-

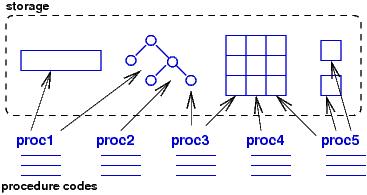

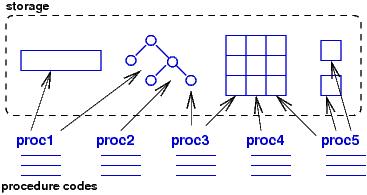

imperative (procedural) programming:

This is the style most people learn first: variables, assignments,

loops. A problem is solved by dividing its data into

small bits (variables) that are updated by assignments.

Since the data is broken into small bits, updating goes

in stages, repeating assignments over and over, using loops.

Do we do everyday tasks this way? Really, no.

This programming paradigm comes from 1950s computer hardware ---

it's all about reading and resetting

hardware registers.

It's no accident that the most popular imperative language, C,

is a language for systems software.

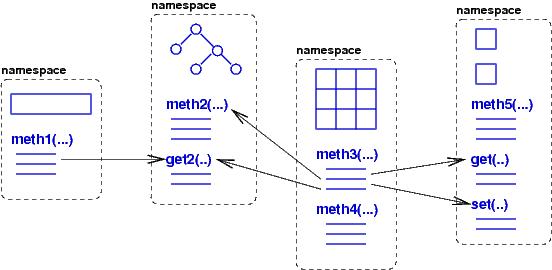

Now, object languages like Java and C# rely on variables and assignments,

but the languages try to "divide up" computer memory so that variables

are "owned" by objects, and each object is a kind of "memory region" with its

own "assignments", called methods.

This is a half-step in the direction of making us forget about 1950s computers.

-

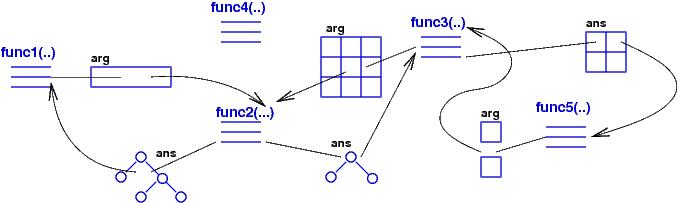

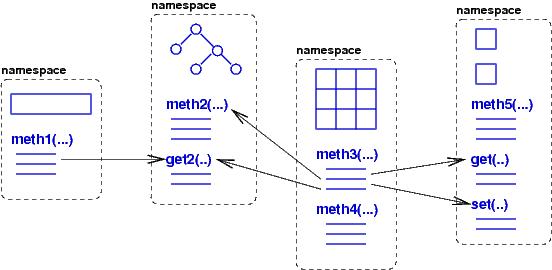

Declarative (functional) programming:

The imperative paradigm requires

memory that is updated by assignments; it is single-machine programming.

This approach is

impossible for a distributed system, a network, the

internet, the web. A node might own private memory, but sharing and assignment to it cause race conditions. Indeed, there isn't even a global clock in such systems!

Declarative programming dispenses with memory and assignments.

All data is message-like or parameter-like, copied and passed from one component to the next.

Since there are no assignments, command sequencing ("do this line first, do this line second, etc.") becomes unimportant and can even be discarded.

The result is a kind of "programming algebra":

Think back to algebra class, where you wrote a set of simultaneous equations to define an answer to a problem --- the equations were definitions and their ordering didn't matter. Algebra is a declarative programming language.

If you work with physicists, mathematicians, and chemists,

you find that they think in terms of equations, and they define

solutions to problems in equations.

The declarative paradigm works this way: you program an answer

to a problem as a kind of equation set that uses complex input parameters ("messages") to calculate

outputs.

Since there is no memory, data values (parameters) must include not only primitives (ints, strings) but also data structures (sequences, tables, trees).

Components pass these complex parameter data structures to each other.

There are no race conditions because there are no global variables --- all information is a parameter or a returned answer.

This paradigm applies both to a program ("function") that lives on one machine and also

to a distributed system of programs --- parameters replace memory.

-

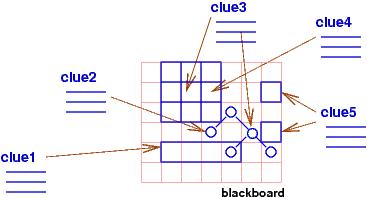

logical (constraint solving) programming:

When you solve a crossword puzzle or a Sudoku puzzle,

you are confronted with a gameboard of squares and

a set of clues. (In Sudoku, the clues are values already

inserted in some of the squares.) You solve the puzzle

by placing values in the squares so that they fit the clues.

Ultimately,

there is a "correct" value for each square, and once you

find the value, it stays in the square permanently. The puzzle

is solved when all the squares are filled correctly to match the clues.

The structure of the puzzle and the clues are literally

a program, because they are constraints for which the

computer searches for a solution. In the logical/constraint

programming paradigm,

a program is a set of

constraints, written as logical assertions, and a computation

finds values for the variables mentioned in the logical assertions

so that all the assertions are made true.

The cells of the puzzle are called logical variables.

The logical assertions are

the clues to the puzzle. The computation tries various

combinations of values to bind to the logical variables,

trying to make true the logical assertions.

For example, 2 + 1 = X is a program (constraint) whose output is X = 3,

and X = X * X is a program whose output can be either X = 0 or

X = 1.

The logical-programming paradigm is useful for solving problems ("queries")

in databases, knowledge discovery, and learning:

a query to the database

is a logical assertion with variables that must receive values to

answer the query. (Think about the SQL programs you have written.)

The paradigm was invented by computer scientists

who studied resolution-based theorem proving; they noticed that a

side-effect of constructing logic proofs that contained existential

quantifiers, ∃X P(X), was that answers, a, for X were computed

that made P(a) hold true. From here, it was a small step to adapting

logic as a language for specifying computation of answers for such

X.

This paradigm uses a shared, global memory, but once a correct answer is inserted into memory, it cannot be changed by destructive assignment.

This eliminates race conditions and allows massive parallelism.

(Think about how two people can work at the same time on the same crossword puzzle.)
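
To make the contrast between the three paradigms concrete, here is a small Python sketch. All function names are hypothetical. The logical style is only imitated: a real logic language finds its answers by proof search, whereas this sketch simply tries every candidate in a finite range, which is an assumption made to keep the example short.

```python
from functools import reduce


# Imperative style: a memory cell (total) is updated by repeated assignment.
def sum_imperative(numbers):
    total = 0
    for n in numbers:
        total = total + n
    return total


# Declarative (functional) style: no assignments; the answer is defined
# by an expression over the input parameter.
def sum_declarative(numbers):
    return reduce(lambda left, right: left + right, numbers, 0)


# Logical (constraint) style, imitated by brute-force search: keep every
# candidate value of X that makes the assertion true.
def solve(constraint, candidates):
    return [x for x in candidates if constraint(x)]


print(sum_imperative([1, 2, 3]))                     # prints 6
print(sum_declarative([1, 2, 3]))                    # prints 6
print(solve(lambda x: 2 + 1 == x, range(-10, 11)))   # prints [3]
print(solve(lambda x: x == x * x, range(-10, 11)))   # prints [0, 1]
```

The last two lines correspond to the constraints 2 + 1 = X and X = X * X mentioned above, with X restricted to the integers from -10 to 10.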

0.3 Programming language anatomy

Although Prolog looks radically different from C, which looks

radically different from ML or Smalltalk, all programming languages

are languages, and languages have standard foundations.

There is a traditional list of these foundations, which states

that every language has a

- syntax: how the words, phrases, and sentences (commands) are spelled.

- semantics: what the syntax means in terms of nouns, verbs, adjectives, and adverbs.

- pragmatics: what application areas are handled by the language and what is the (virtual) machine that executes the language.

To understand syntax, we will learn a notation

for stating syntactic

structure: grammar notation. This comes in the next chapter.

To understand semantics, we will learn about semantic domains

of expressible, denotable, and storable values.

We will also learn extension principles that enrich

the semantic domains. Finally, we will see

how languages like Java, ML, and Prolog grow from "core"

grammars and domains by means of the extension principles.

To understand pragmatics, we will learn the standard virtual machines for

computing programs in a language. The virtual machines might use variable

cells, or objects, or algebra equations, or even

logic laws. In any case, the machines compute execution traces

of programs and show how the language is useful.

0.4 Designing your own language to fit the job

There is another reason why we study the material

in this course: When you are established at a firm or a lab,

you will become expert at solving problems in one application

area, one domain. You will find that your solutions

often match a particular software architecture and

a particular programming paradigm.

You will develop a library of components

that you reuse when you build multiple, similar solutions to similar problems

in the domain.

You will reach the point where it will be useful to share

your knowledge, your libraries, your styles with others.

At this point, you might wish to develop a language that is specialized

to the domain. Such a language is called a

domain-specific language. You use the language to talk

about programs and their solutions. If you can state solutions

(algorithms) in your domain-specific language, and if a computer

can understand your domain-specific language (that is, you write an

interpreter --- virtual machine --- for your domain-specific language), then it is

a domain-specific programming language.
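
As an illustration only, the sketch below implements a tiny, made-up domain-specific language for moving a robot on a grid. The language, its four command words, and the function name interpret are all assumptions invented for this example. The syntax is one command per line; the semantics is a change to the robot's position; the interpreter is the virtual machine that makes the language executable.

```python
def interpret(source):
    """Run a program in a made-up robot-movement language.

    Syntax: one command per line, "north N", "south N", "east N",
    or "west N", where N is a whole number of steps.
    Semantics: each command moves the robot from its current (x, y).
    Returns the final position, starting from (0, 0).
    """
    directions = {
        "north": (0, 1),
        "south": (0, -1),
        "east": (1, 0),
        "west": (-1, 0),
    }
    x, y = 0, 0
    for line_number, line in enumerate(source.splitlines(), start=1):
        words = line.split()
        if not words:
            continue  # blank lines are allowed
        if (len(words) != 2 or words[0] not in directions
                or not words[1].isdecimal()):
            raise SyntaxError(f"line {line_number}: cannot parse {line!r}")
        dx, dy = directions[words[0]]
        steps = int(words[1])
        x, y = x + dx * steps, y + dy * steps
    return (x, y)


program = """
north 3
east 2
south 1
"""
print(interpret(program))  # prints (2, 2)
```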

Most of us will never design a language like C or Java, but many of us

have designed or will design domain-specific languages to solve

a narrow class of problems in a specialty domain. If you want your

language to be good quality, you must learn the concepts

in this course about syntax, semantics, and pragmatics --- you will rely

on all three to design a useful domain-specific language.

You have probably hacked code in HTML --- it's a domain-specific

language for web-page layout. You might have used a gamer package

to create an interactive game;

you have thus used a domain-specific language for gaming.

Excel is a clever, text-plus-graphics domain-specific language for

spreadsheet construction. make is a hugely useful little language

for describing how to compile and link C files into one large C program.

And so it goes. Indeed, any nontrivial, grammatical input format for an application

is a domain-specific language, with its own syntax, semantics, and pragmatics ---

language design and systems design go hand in hand.