A paradigm is a pattern or stylistic approach to doing or stating something. For example, when you prepare your resumé, you use a standard pattern, a paradigm, for formatting it. Another paradigm is the layout of the engine in a car --- although there are many different models of car, virtually all of them use the same design for their internal-combustion engine. Paradigms are useful to architects, who design and build skyscrapers with one paradigm and wood-frame family residences with another. Writers use paradigms for short stories, novels, and newspaper stories. Scientists and engineers use paradigms, too.

Paradigms are useful in computer hardware --- there are standard chip layouts, network layouts, etc. --- and they are also useful to software architects, who lay out components in patterns that work well for specific applications. In the next section, we review some paradigms for building large software systems.

There are also paradigms for thinking about and solving problems. We call these programming (language) paradigms. One of the purposes of this course is to expose you to several programming paradigms, and in this chapter we learn why this is useful.

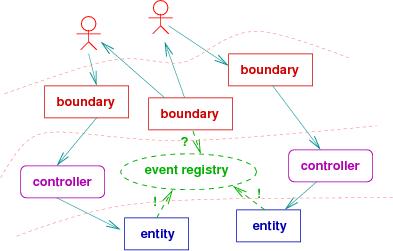

When a complex piece of software is designed, coded, and assembled, there must be some architectural plan that is followed. If you have completed the CIS501 course on software architecture, you learned about the reactive-system architecture --- it is a collection of components, some of which receive inputs (``boundary classes''), some of which hold data structures and algorithms that compute answers (``entity classes''), and some that coordinate input-output between boundary and entity classes (``controller classes'').
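The three roles can be sketched in a few lines of Python. This is only an illustration of the division of labor, not code from CIS501; the class names `TextInput`, `Counter`, and `Controller` are hypothetical.

```python
class Counter:
    """Entity class: holds the data and the algorithm that computes answers."""
    def __init__(self):
        self.total = 0

    def add(self, amount):
        self.total += amount
        return self.total


class TextInput:
    """Boundary class: receives raw input and converts it to a usable value."""
    def read(self, raw):
        return int(raw.strip())


class Controller:
    """Controller class: coordinates input-output between boundary and entity."""
    def __init__(self, boundary, entity):
        self.boundary = boundary
        self.entity = entity

    def handle(self, raw):
        amount = self.boundary.read(raw)   # input arrives at the boundary
        return self.entity.add(amount)     # the entity computes the answer


controller = Controller(TextInput(), Counter())
results = [controller.handle(line) for line in ["3", " 4 ", "10\n"]]
print(results)  # [3, 7, 17]
```

Notice that the entity knows nothing about input formats and the boundary knows nothing about the running total; only the controller sees both.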

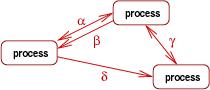

In CIS501, you drew blueprints --- class diagrams and object diagrams --- that showed the architecture of reactive systems; here's an object diagram:

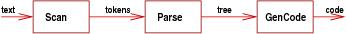

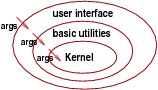

When a complex piece of software is designed, coded, and assembled, some architectural plan must be followed. There are several classic ones, and no doubt you have used one or more of these:

When you work within a problem domain, you must choose the appropriate software architecture for solving the problems in that domain. For this reason, we will survey domain-specific models and languages at the end of this course.

Just as you would be crippled if you went to work knowing only one architectural paradigm, you will be crippled if you go to work knowing just one programming paradigm.

One unfortunate aspect of a course-based, four-year college education in computing is that everyone is in a hurry to learn enough to get a job and pay off their loans. This means the instructors are pressured to structure their courses to provide just enough material so that you can program well in one language (e.g., Java), in one style (e.g., object-oriented), in one software architecture (e.g., reactive system). You are all trained to do exactly the same thing! Then a person gets a job, finds that they aren't prepared for what the job demands, and has to undertake remedial training.

The purpose of this course is to broaden your background so you don't fall into this trap.

Let's reconsider the software architectures listed above. How would we code them?

The above examples hinted at these standard programming paradigms:

Do we do everyday tasks this way? Really, no. This programming paradigm comes from the computer hardware --- imperative programming is really all about reading and resetting hardware registers. This style of programming arose when hardware people got tired of rewiring computer hardware for each and every task --- they developed imperative programming notation that represents the wiring diagrams (that is, flow charts --- they are wiring diagrams!).
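The ``reading and resetting'' view shows through even in a high-level language. Here is a minimal Python sketch, in which ordinary variables stand in for hardware registers; the function name `sum_to` is just an illustration.

```python
def sum_to(n):
    """Add up 1..n by repeatedly reading and resetting two storage cells."""
    total = 0               # cell holding the running sum
    i = 1                   # cell holding the loop counter
    while i <= n:           # the test box of the flow chart
        total = total + i   # read total and i, then reset total
        i = i + 1           # read i, then reset i
    return total


print(sum_to(4))  # 10
```

Every step either tests a cell or overwrites one, and the order of the steps is the whole program.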

This paradigm was developed, almost single-handedly, by John McCarthy at M.I.T. in the 1950s. McCarthy needed a computer language for natural-language processing, and since words and sentences can be of arbitrary length, he was not happy using Fortran variables and fixed-length arrays. He started working with ``arrays that grew'' --- lists --- and he noticed that recursively defined algebraic equations worked naturally for processing the words in a list.

McCarthy viewed a list as a hierarchical structure: a list has a ``top level'' (its front element) followed by all the levels (elements) underneath. The appropriate way to process a list is to write an equation (function) that looks at the top level of the list and then calls itself to look at the remaining, lower levels, much like a cook peels an onion one layer at a time. McCarthy was comfortable with using recursively defined functions because he was trained in mathematics and recurrence equations.

The functional style works best with hierarchical data structures that can be processed one level at a time.
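Here is a small sketch of the ``peel one layer'' idea, written in Python rather than in McCarthy's Lisp. The function `total_length` is a made-up example: it looks at the front word of a list and calls itself on the rest.

```python
def total_length(words):
    """Sum the lengths of the words in a list, one level at a time."""
    if not words:                  # nothing left to peel
        return 0
    front, rest = words[0], words[1:]
    return len(front) + total_length(rest)


print(total_length(["peel", "an", "onion"]))  # 11
```

The definition reads like a pair of equations: the answer for the empty list is 0, and the answer for a nonempty list is the length of its front element plus the answer for the remaining elements. No variable is ever reset.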

The structure of the puzzle and the clues are literally a program, because they are constraints for which the computer searches for a solution. In the logical/constraint programming paradigm, a program is a set of constraints, written as logical assertions, and a computation finds values for the variables mentioned in the logical assertions so that all the assertions are made true. The variables are called logical variables, and they are like the squares of a puzzle. The logical assertions are like the clues to the puzzle. The computation tries various combinations of values to bind to the logical variables, trying to make the logical assertions true. If a combination of values does not succeed in making the assertions true, the computation backtracks (erases some of the values from the logical variables) and tries other combinations.

For example, 2 + 1 = X is a program (constraint) whose output is X = 3, and X = X * X is a program whose output can be either X = 0 or X = 1.
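The search can be imitated in Python with a naive try-and-backtrack loop. This is a sketch under a strong assumption, namely that each logical variable ranges over a small finite set (here the integers 0 through 9). Real logic and constraint languages use far more refined techniques, and the function `solve` is purely illustrative.

```python
def solve(variables, domain, constraints, bindings=None):
    """Yield every binding of variables to domain values that makes all
    constraints true. Each constraint is a function from bindings to bool."""
    bindings = {} if bindings is None else bindings
    if len(bindings) == len(variables):
        if all(check(bindings) for check in constraints):
            yield dict(bindings)       # every assertion holds: an answer
        return
    var = variables[len(bindings)]     # next unbound logical variable
    for value in domain:
        bindings[var] = value          # try a value
        yield from solve(variables, domain, constraints, bindings)
        del bindings[var]              # backtrack: erase the value


# The constraint 2 + 1 = X
print(list(solve(["X"], range(10), [lambda b: 2 + 1 == b["X"]])))
# [{'X': 3}]

# The constraint X = X * X
print(list(solve(["X"], range(10), [lambda b: b["X"] == b["X"] * b["X"]])))
# [{'X': 0}, {'X': 1}]
```

The ``program'' is only the list of constraints; the search strategy lives entirely in `solve`, which the programmer of the constraints never has to read.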

The logical-programming paradigm is useful for solving problems in databases, knowledge discovery, and learning: a data- or knowledge-base is coded as a set of logical assertions, and a query to the database is a logical assertion with variables that must receive values to answer the query. The paradigm was invented by computer scientists who studied resolution-based theorem proving; they noticed that a side effect of constructing logic proofs that contained existential quantifiers, ∃X P(X), was that answers, a, for X were computed that made P(a) hold true. From here, it was a small step to adapting logic as a language for specifying computation of answers for such X.
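To see a query ``receive values,'' consider this toy knowledge base in Python. Everything in it is an assumption made for illustration: the `parent` facts, the convention (borrowed from Prolog) that capitalized names are variables, and the helper names `match` and `answers`. It does simple pattern matching against stored facts, not full resolution.

```python
FACTS = [
    ("parent", "ann", "bob"),
    ("parent", "bob", "cy"),
    ("parent", "ann", "dee"),
]


def is_variable(term):
    # Assumed convention: a capitalized name is a logical variable.
    return term[:1].isupper()


def match(query, fact, bindings):
    """Return bindings extended so that query equals fact, or None."""
    if len(query) != len(fact):
        return None
    extended = dict(bindings)
    for q, f in zip(query, fact):
        if is_variable(q):
            if extended.setdefault(q, f) != f:   # already bound to another value
                return None
        elif q != f:
            return None
    return extended


def answers(queries, bindings=None):
    """Yield each set of bindings that makes every query match some fact."""
    bindings = {} if bindings is None else bindings
    if not queries:
        yield bindings
        return
    first, rest = queries[0], queries[1:]
    for fact in FACTS:
        extended = match(first, fact, bindings)
        if extended is not None:
            yield from answers(rest, extended)


# Who are ann's children?
print(list(answers([("parent", "ann", "X")])))
# [{'X': 'bob'}, {'X': 'dee'}]

# Who is a grandchild of ann, and through which child?
print(list(answers([("parent", "ann", "Y"), ("parent", "Y", "Z")])))
# [{'Y': 'bob', 'Z': 'cy'}]
```

In the second query, the binding Y = dee is tried and then abandoned, because no stored fact makes dee a parent.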

It is best to think of communicative programming as ``collective programming,'' where, like the ant colony, a large collection of actors or objects work together in parallel to solve a problem. The primary advantage is speed within the parallel solution; the primary disadvantage is the huge overhead in coordinating the actors to combine their small parts of the solution. Often, the coordination overwhelms the computation and cancels the advantages of the parallelism.
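The split-then-combine pattern can be sketched with Python's standard `concurrent.futures` module. The task (counting vowels) and the function names are hypothetical. Note also that in the standard CPython interpreter, threads doing pure computation largely take turns rather than run simultaneously, so this shows the structure of a collective solution and not a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def count_vowels(chunk):
    """One worker's small part of the job."""
    return sum(1 for ch in chunk if ch in "aeiou")


def collective_count(text, workers=4):
    """Split the text among workers, then combine their partial answers."""
    size = max(1, len(text) // workers)
    chunks = [text[i:i + size] for i in range(0, len(text), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial = list(pool.map(count_vowels, chunks))   # workers run here
    return sum(partial)                                  # coordination step


text = "a large collection of actors work together"
print(collective_count(text) == count_vowels(text))  # True
```

For a string this short, chopping the text up, starting the pool, and summing the partial counts costs far more than the counting itself, which is exactly the coordination overhead described above.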

Smalltalk-style object-oriented programming is a watered-down version of communicative programming, where each actor is provided substantial computational skills and the communication between actors is dramatically simplified to reduce the coordination overhead. Java and C# are modern variations of Smalltalk.

To understand syntax, we will learn a notation for stating syntactic structure: grammar notation. This comes in the next chapter.

To understand semantics, we will learn about semantic domains of expressible, denotable, and storable values. We will learn that these domains consist of primitive and structured values. We will also learn about extension principles that enrich a language based on its semantic domains. Finally, we will see how languages like Java, ML, and Prolog are grown from ``core'' grammars and semantic domains.

To understand pragmatics, we will learn about standard models for computing the semantics of a program in a language. The models might be machines or they might be equations or they might be logic laws. In any case, the models show us how to compute execution traces of programs and understand how a language is useful.

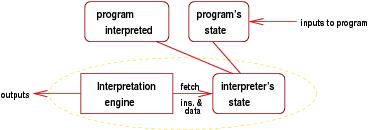

You will reach the point where it will be useful for you to share your knowledge, your libraries, your styles with others. Maybe you will share them with non-software engineers who use your work. At this point, you might wish to develop a language that is specialized to the domain and its problems. Such a language is called a domain-specific language. You use the language to talk about problems and their solutions. If you can state solutions (algorithms) in your domain-specific language, and if a computer can understand your domain-specific language (that is, you write an interpreter for your domain-specific language), then it is a domain-specific programming language.
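As a small illustration, here is an invented domain-specific language for steering a robot on a grid, together with a Python interpreter for it. The command words (`north`, `south`, `east`, `west`) and the function `run` are made up for this sketch.

```python
MOVES = {"north": (0, 1), "south": (0, -1), "east": (1, 0), "west": (-1, 0)}


def run(program):
    """Interpret a robot program; return the final (x, y) position."""
    x, y = 0, 0
    for line in program.splitlines():
        line = line.strip()
        if not line:
            continue                       # ignore blank lines
        direction, steps = line.split()    # syntax: <direction> <count>
        dx, dy = MOVES[direction]          # semantics: a unit displacement
        x += dx * int(steps)
        y += dy * int(steps)
    return x, y


program = """
north 3
east 2
south 1
"""
print(run(program))  # (2, 2)
```

Someone who knows nothing about Python can write the three-line program; the interpreter is what turns the notation into a programming language.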

Most of us will never design a language like C or Java, but many of us have designed or will design domain-specific languages to solve a narrow class of problems in a specialty domain. If you want the language you design to be good quality, you must learn the concepts in this course about paradigms, syntax, and semantics --- you will rely on all three to design a useful domain-specific language.

You have probably hacked code in HTML --- it's a domain-specific language for web-page layout. You might have used a gamer package to create players and an environment for an interactive game; you have thus used a domain-specific language for gaming. And so it goes. Indeed, any nontrivial input format for an application is a domain-specific language, with its own syntax and semantics --- language design and systems design go hand in hand.